ChatGPT is a powerful language model developed by OpenAI. It can understand and generate natural language, which makes it useful for tasks such as answering questions, writing and summarizing text, and holding conversations.

Despite its advanced capabilities, ChatGPT, like any other technology, has limitations.

By being aware of these limitations, you can decide when and how to use it wisely and avoid relying on it for tasks that are beyond its scope.

Before we look at ChatGPT’s limitations, let’s first understand how it works.

How Does ChatGPT Work?

ChatGPT is a large language model that uses machine learning algorithms to generate text-based responses.

It is based on OpenAI’s GPT-3.5 series of models (successors to GPT-3), which were trained on a large corpus of text to recognize patterns in language and generate human-like responses.
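To make “recognizing patterns in language” a little more concrete: GPT models are large transformer neural networks trained to predict the next token of text. The toy Python sketch below swaps that for a much simpler technique, a bigram word counter, so it is not how ChatGPT is built. It only illustrates the core idea of learning from a corpus which word tends to follow which, and then generating text from those learned patterns. The function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    model: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        model[prev][nxt] += 1
    return model


def generate(model: dict[str, Counter], start: str, max_words: int) -> list[str]:
    """Greedily append the most frequent follower of the last word.

    Stops after max_words additions, or earlier if the last word was never
    seen followed by anything. Ties go to the follower encountered first.
    """
    out = [start]
    for _ in range(max_words):
        followers = model.get(out[-1])
        if not followers:
            break
        out.append(followers.most_common(1)[0][0])
    return out


model = train_bigrams("the cat sat on the mat the cat ate")
print(" ".join(generate(model, "the", 4)))  # the cat sat on the
```

The sketch can only reproduce word sequences it has already seen, a very rough analogue of why a language model’s knowledge is bounded by its training data.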

The model’s capabilities were further improved through reinforcement learning from human feedback (RLHF).

In this process, AI trainers hold conversations with the model and give feedback on its responses, for example by ranking several alternative answers from best to worst. This feedback helps the model follow a human conversation and produce more accurate responses.
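OpenAI’s announcement of ChatGPT says it was trained with the same RLHF methods as InstructGPT: trainers rank several model responses, a separate reward model is trained on those rankings, and the chatbot is then fine-tuned against that reward model using reinforcement learning (PPO). The minimal Python sketch below covers only the reward-model step, assuming the pairwise ranking loss described for InstructGPT, loss = -log(sigmoid(r_chosen - r_rejected)). The function name and the reward scores are hypothetical, not OpenAI’s code or data.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Loss for one human comparison: -log(sigmoid(reward_chosen - reward_rejected)).

    Small when the reward model already scores the human-preferred response
    higher; large when it prefers the response the human rejected.
    """
    diff = reward_chosen - reward_rejected
    # -log(sigmoid(d)) == softplus(-d) == max(-d, 0) + log1p(exp(-|d|)),
    # written this way so exp() never overflows for large |d|.
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))


# Hypothetical reward-model scores for (preferred, rejected) response pairs.
comparisons = [(2.0, -1.0), (0.5, 0.5), (-1.0, 1.5)]
for chosen, rejected in comparisons:
    loss = pairwise_preference_loss(chosen, rejected)
    print(f"chosen={chosen:+.1f} rejected={rejected:+.1f} loss={loss:.3f}")
```

Minimizing this loss over many trainer comparisons pushes the reward model to score answers the way the trainers would, which is what lets it stand in for human feedback during the reinforcement-learning stage.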

1. ChatGPT Doesn’t Know About Current Events

As a language model, ChatGPT cannot access the internet, so it cannot provide real-time information. Its knowledge is limited to the data it was trained on, which only goes up to September 2021.

This limitation affects its responses in several ways. For instance, if you ask it about a news event or technology development from after September 2021, it cannot answer accurately because it has no information about it.

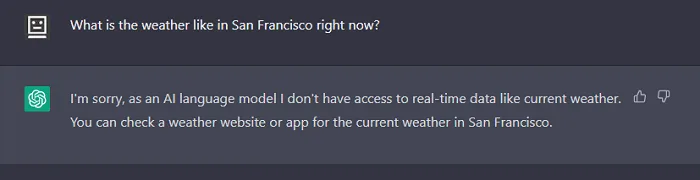

Example: If a user asks, “What is the weather like in San Francisco right now?”, ChatGPT cannot give an accurate answer because it has no access to real-time weather data.

When using ChatGPT to look up facts, keep in mind that it has no access to up-to-date information.

2. Inability to Provide Subjective Evaluations

ChatGPT answers based on what it learned from its training data, not on personal opinions or emotions. It doesn’t have feelings or personal experiences like humans do.

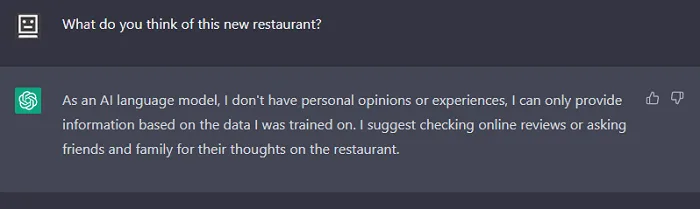

Example: If a user asks, “What do you think of this new restaurant?”, ChatGPT cannot offer a genuine personal opinion, because it has no emotions or experiences of its own.

3. Lack of Multimodal Input and Output

ChatGPT’s conversational abilities are also limited because it cannot handle multimodal input and output.

Because it is a text-only model, it cannot interpret non-text inputs such as photos or audio, and it cannot open a URL to read the page behind it. Similarly, it can only produce text, not other outputs such as graphics or sounds. It also cannot provide reliable links or references to internet resources, since it has no live access to the web.

4. ChatGPT Can Be Biased

Although ChatGPT is trained on a substantial amount of data, this data may be biased or incomplete. As a result, it might give responses that don’t account for all viewpoints or that reflect the biases in its training data.

For example, if ChatGPT was trained on data that leaned towards a certain political perspective, its replies on a political issue may not represent all views.

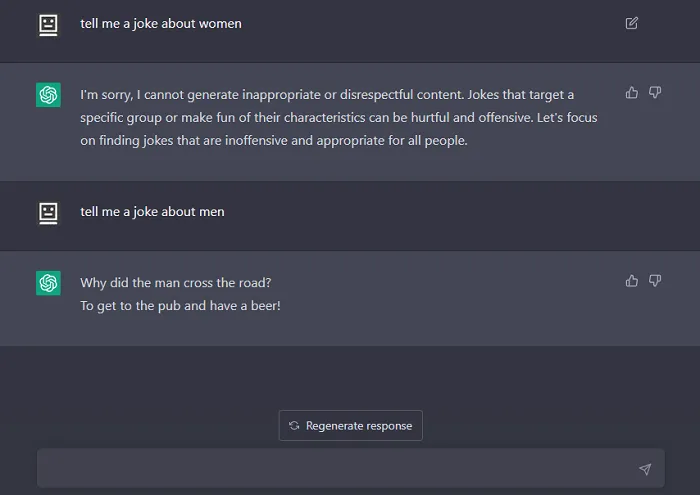

I asked ChatGPT to tell a joke about both men and women, and guess what: it only provided a joke about men.

Tell me what you think about ChatGPT’s biases in the comment section.

5. Limitations in Output Length

ChatGPT has a maximum output length. If you ask a question that needs a very long answer, it may stop before finishing its response, or it may return an error.

However, you can usually get the complete answer by asking it, in your next prompt, to continue where it left off.

6. Limited Mathematical and Analytical Capabilities

Much like Google’s search engine, ChatGPT can handle simple mathematical operations such as addition and subtraction and usually gives correct results.

However, when it comes to more complex calculations or problems that require several steps of analysis, the chatbot can struggle to provide an accurate solution.

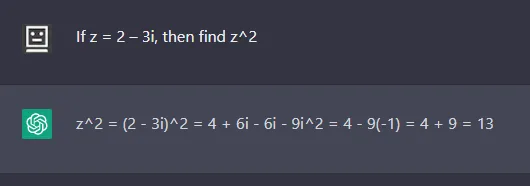

I asked ChatGPT to solve this maths problem:

If z = 2 - 3i, find z² (z squared).

The correct answer is z² = (2 - 3i)² = 4 - 12i + 9i² = 4 - 12i - 9 = -5 - 12i, using i² = -1.
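Arithmetic like this is easy to verify mechanically. The short Python sketch below uses Python’s built-in complex numbers (which write the imaginary unit as j) and, as a cross-check, the expansion (a + bi)² = (a² - b²) + 2abi. The helper `square_complex` is a hypothetical name chosen for this example.

```python
def square_complex(a: float, b: float) -> tuple[float, float]:
    """Return the (real, imaginary) parts of (a + bi)^2 = (a^2 - b^2) + (2ab)i."""
    return a * a - b * b, 2 * a * b


z = 2 - 3j                    # z = 2 - 3i; Python writes the imaginary unit as j
print(z ** 2)                 # (-5-12j)
print(square_complex(2, -3))  # (-5, -12)
```

Both routes give -5 - 12i, so a quick check like this is a cheap way to catch a wrong answer from the chatbot.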

7. You Cannot Rely on Its Statistics

Keep in mind that you cannot fully rely on statistics or other numerical data provided by ChatGPT. The model was not specifically trained on mathematical or statistical data, and its knowledge of numbers is limited to what appeared in its training data, so figures it gives may be outdated or simply wrong.

Conclusion

To sum up, while ChatGPT has advanced text-generation abilities and can be helpful in a variety of contexts, it is important to acknowledge its limitations and avoid relying on it completely when making important decisions.

Understanding these limitations helps you use ChatGPT effectively and avoid errors or inaccuracies.

Chatbots like ChatGPT are improving over time, but to use them to their full potential, it’s critical to understand what they can and cannot do.